More Agents Won't Save You

Multi-agent coding is finally real enough to be useful, but only if you stop treating it like "more agents = more output".

What actually works is more disciplined than that:

- split work into vertical slices

- keep each slice independently testable

- run a tight red/green/refactor loop inside each slice

- group slices into waves

- add guard steps before the next wave

- keep a human orchestrator responsible for integration, judgment, and course correction

That is the workflow I keep coming back to, whether the tooling is Claude Code agent teams, Codex multi-agent workflows, or plain old terminal panes with separate worktrees.

The key idea is simple: parallelism is useful, but only if you preserve feedback loops.

Why This Matters Now

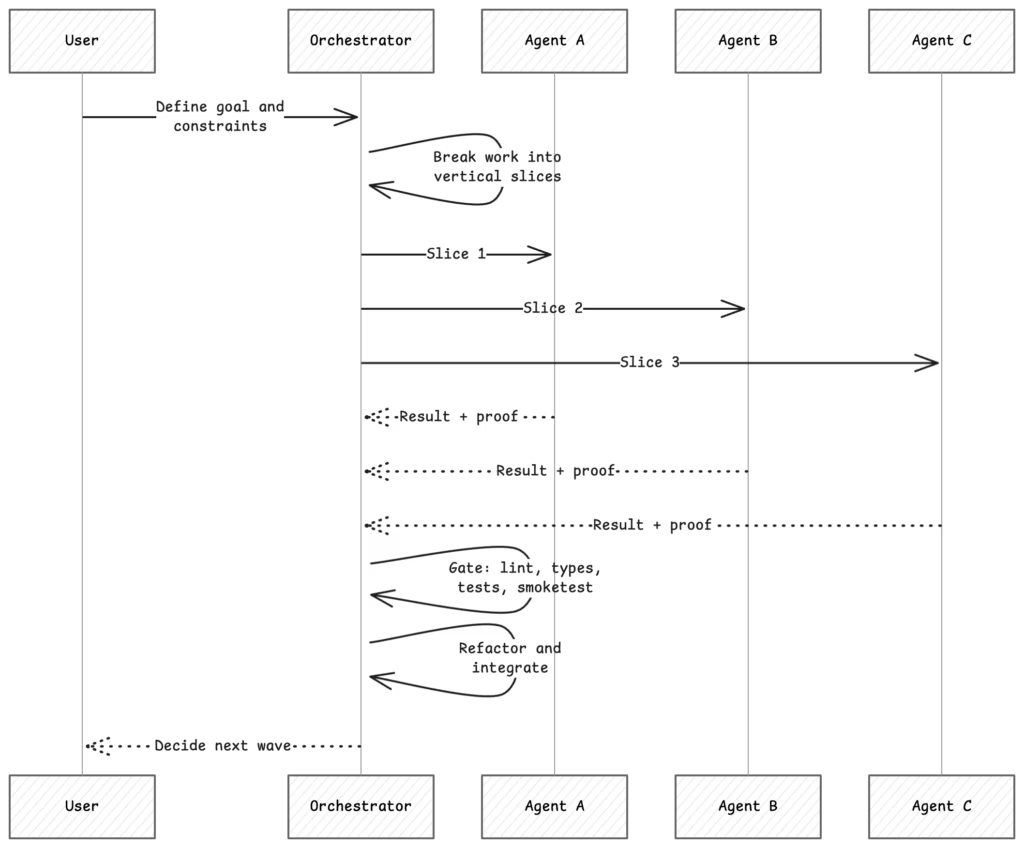

Both Anthropic and OpenAI now expose explicit multi-agent workflows:

- Claude Code agent teams are designed for multiple sessions with a lead and teammates, with direct inter-agent communication and a shared task list. Anthropic explicitly frames them as best for research, reviews, new modules, and cross-layer work where parallel exploration adds value.

- Codex multi-agent workflows let the main agent spawn specialized agents in parallel, collect summarized results, and keep noisy intermediate work off the main thread.

- Both platforms also quietly point toward the same operating model: parallelize independent work, then centralize synthesis. Anthropic even documents hook points for blocking task completion or sending teammates back to work, which is basically a built-in place to enforce quality gates.

That last point matters more than it sounds. OpenAI's Codex concepts docs explicitly call out context pollution and context rot: if you dump logs, notes, stack traces, and half-finished exploration into the main thread, the session gets less reliable over time. Their recommended answer is to offload noisy work to sub-agents and bring back summaries instead of raw junk. If you want the longer research backdrop on this, read Chroma's paper on Context Rot.

This matches what many of us have learned the hard way: if the main thread becomes a landfill, the system stops thinking clearly.

For a good long-form conversation on the agentic loop side of this, the Lex Fridman interview with Peter Steinberger is worth watching: https://www.youtube.com/embed/YFjfBk8HI5o

The Real Unit of Parallel Work Is the Vertical Slice

A lot of agent failures come from assigning work in horizontal layers:

- one agent does schema

- one agent does API

- one agent does UI

- one agent does tests

That looks parallel on paper. In practice it creates handoff debt, merge conflicts, and ambiguity about whether anything actually works end-to-end.

The better unit is a thin vertical slice:

- one behavior

- one observable outcome

- one acceptance test

- one narrow blast radius

In my own workflow, that usually means every slice should be demoable, reviewable, and verifiable on its own. If a slice cannot be tested independently, it is usually too wide.

This is also why skills and worktree-based setups matter. A good skill is not just a macro. It is a boundary. It narrows the job, encodes the operating procedure, and reduces how much improvisation the agent has to do. The same is true of one-issue-per-worktree setups, proof-oriented review flows, and repo-local rules like the ones behind this repo's linear-workflow, worktree conventions, and reflect habit.

Red, Green, Refactor Is Even More Important With Agents

Matt Pocock made the point well in his TDD workflow write-up and video lesson: agents tend to produce worse results when asked to write a whole feature and then test it afterward. That encourages horizontal slicing and imagined coverage.

If you want the video version directly in the article, here is a linked thumbnail to the AI Hero lesson:

The safer loop is the classic one:

Red: write one failing test for the next bit of behavior.Green: write the minimum implementation to make it pass.Refactor: clean up while the test suite stays green.- Repeat.

Martin Fowler's TDD write-up describes the same cycle as the heart of TDD: write a test for the next bit of functionality, write code until the test passes, then refactor both new and old code into a better shape.

For agents, this is not just software craft purity. It is a control system.

Why it works:

- it constrains the task size

- it creates a concrete feedback signal

- it limits hallucinated implementation

- it reduces the odds of the agent "cheating" by drifting the test to match broken code

- it gives the orchestrator a natural checkpoint after every meaningful step

When people say agents work best on "small tasks", this is often what they actually mean: tasks that still close the loop fast enough to produce trustworthy feedback.

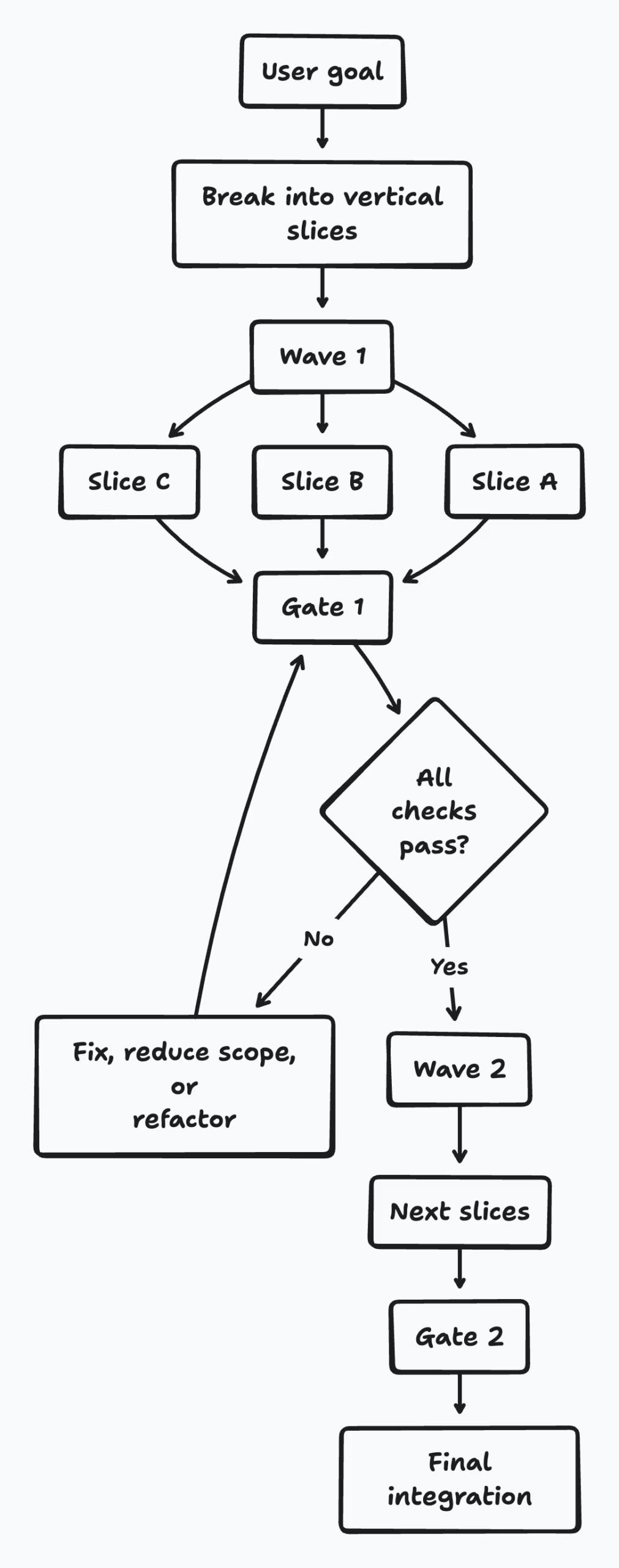

Waves and Gates Beat Unbounded Parallelism

The pattern that has worked best for me is to divide work into waves, then put a guard step between waves.

Within a wave, slices should be parallel-safe:

- separate files or directories

- clear ownership

- minimal hidden dependency between slices

- obvious verification for each slice

Between waves, the orchestrator runs a gate:

- merge or integrate the completed work

- run lint, typecheck, and tests

- run a smoke check if UI or runtime behavior changed

- inspect whether the architecture still makes sense

- decide whether to refactor before expanding the surface area

That gate is where you close the loop.

This is close to how I think about agent teams in practice:

- the wave creates concurrency

- the gate restores coherence

Without the gate, you do not have a workflow. You have fan-out and hope.

"Closing the Loop" Is the Right Mental Model

Peter Steinberger uses language around the agentic loop and closing the loop that I think gets at something important. His Lex conversation page, the full transcript, and related essays like Just Talk To It and My Current AI Dev Workflow all point in the same direction: autonomy gets more useful when the surrounding loop gets tighter.

In his conversation with Lex Fridman, the discussion is not just about letting agents run. It is about building the surrounding loop: queuing, memory, harnesses, follow-up actions, and the tricky balance between autonomy and human oversight. Lex explicitly frames the question as the tension between keeping a human in the loop and creating an agentic loop that is highly autonomous.

That is exactly the design problem.

For coding workflows, "closing the loop" means at least four things:

- The agent gets a bounded task with a real verification target.

- The result gets checked by something external to the agent's own narration.

- The orchestrator decides whether to integrate, refactor, retry, or stop.

- The learning gets captured so the next loop is cheaper and sharper.

That last step is underrated. In my own repo workflow, that can mean:

- updating repo rules

- tightening a skill

- improving a prompt template

- preserving a useful command or proof checklist

If you do not feed the lesson back into the system, you are not really closing the loop. You are just surviving one run at a time.

A Practical Multi-Agent Pattern

Here is the operating model I would recommend for real work:

A few practical rules:

- Use parallel agents mostly for read-heavy exploration, bounded implementation, review, and verification.

- Avoid parallel writes to the same file.

- Keep 3-5 active workers unless the tasks are extremely uniform.

- Prefer one clear owner per slice.

- Do not let the main thread drown in worker output. Ask for summaries and proof.

- Refactor between waves, not only at the very end.

Example 1: Shipping a Search Filter Feature

Imagine a product task like this:

Add a "family-friendly" filter to event discovery, including ranking effects, UI state, and analytics.

Bad split:

- Agent 1: database

- Agent 2: backend

- Agent 3: frontend

- Agent 4: tests

Better split:

Wave 1

- Slice A: represent

familyFriendlyin the search contract and verify it flows through the API - Slice B: expose the filter in the UI and verify it survives reload/share state

- Slice C: add ranking behavior for the filter and prove relevant results move upward

Gate 1

- typecheck

- API tests

- UI smoke test

- manual or scripted verification of search behavior

Wave 2

- Slice D: analytics and observability

- Slice E: docs and reviewer proof

Why this split works:

- each slice is independently testable

- each slice has a visible user outcome

- the gate happens before more polish work accumulates

Example 2: A Mid-Sized Production Repo

A mid-sized production repo naturally suggests multi-agent work when it already has strong procedural boundaries:

- a workflow tool pushes work into vertical slices, waves, and gates

- worktree conventions isolate branches and local environments

- a smoke-first testing harness supports UI verification

- recording and asset-upload tools turn verification into reviewable artifacts

- a "reflect" step closes the session by turning lessons into durable rules

- domain skills such as scraper, deployment, observability, and feature-port work encode specialized operating procedures

That means you can give agents jobs like:

- one agent ports a feature view

- one agent writes the smoke path

- one agent captures proof artifacts

- one agent updates the docs

That is not "AI doing random stuff in parallel". It is a small production system with explicit contracts between workers.

Example 3: An Operations Task

This pattern is not just for features.

Say you want to investigate a production issue:

Search latency spiked after a deploy and users report mixed relevance quality.

Useful split:

- Agent 1: review logs and traces

- Agent 2: inspect ranking changes and feature flags

- Agent 3: compare recent deploys and config drift

- Agent 4: draft the rollback/fix options

Gate:

- synthesize evidence

- decide on rollback vs patch

- run the lowest-risk fix

- verify the result

- record the operational learning

This is exactly where agent teams shine: competing hypotheses, read-heavy investigation, and fast convergence. It is also exactly where they fail if everyone edits production code at once.

Prompting for This Style of Work

The most useful prompts are not magical. They just encode the workflow.

Multi Agent Team prompt

Create an agent team for this task.

Break the work into vertical slices, not horizontal layers.

Each slice must be independently testable and have a clear owner.

Work in waves.

After each wave, stop for a gate:

- summarize what changed

- list proof for each slice

- run lint/typecheck/tests or the closest equivalent

- identify refactors before starting the next wave

Avoid overlapping file ownership.

Prefer small, end-to-end slices over large component buckets.

Where This Works Best

This workflow is a great fit when:

- the work can be decomposed into low-collision slices

- verification is cheap and reliable

- multiple hypotheses are worth testing in parallel

- there is clear orchestrator ownership

It is a bad fit when:

- the task is fundamentally sequential

- multiple workers need to edit the same hot files

- the system lacks tests, smoke paths, or observability

- nobody is available to synthesize and make decisions at the gates

The orchestrator is not optional. If nobody owns synthesis, the team becomes a very expensive source of inconsistent drafts.

The Bigger Shift

The interesting change is not that coding tools can now spawn helpers.

It is that software work starts to look more like managing a compact engineering system:

- architecture as task design

- prompts as operating procedures

- tests as control surfaces

- gates as quality checkpoints

- rules and skills as organizational memory

That is why I think "closing the loop" is the right phrase.

The goal is not to maximize agent activity.

The goal is to create a loop where decomposition, execution, verification, refactoring, and learning reinforce each other. Once you have that, the extra agents help. Without it, they just make the mess faster.

Further Reading

Official docs

- Claude Code: Orchestrate teams of Claude Code sessions

- OpenAI Codex: Multi-agents

- OpenAI Codex Concepts: Multi-agents

TDD and red/green/refactor

Context management and parallelism

Peter Steinberger / closing the loop

- Lex Fridman Podcast #491 with Peter Steinberger

- Transcript: Peter Steinberger on the agentic loop, human-in-the-loop balance, and running multiple agents

- Peter Steinberger: Just Talk To It - the no-bs Way of Agentic Engineering

- Peter Steinberger: My Current AI Dev Workflow